Jetbrains' AI Assistant Review

I tried the Jetbrains AI assistant to better understand its features and usefulness in everyday coding.

Recently, I decided to try out the AI assistant that’s become native in Jetbrains’ products. I use Rider for my backend code (predominantly) and WebStorm for front-endy stuff. After my initial trial ran out, I realised I hadn’t really gotten a good chance to use it so I forked out for the subscription and thought I’d do a bit of a review of my findings.

What model is it running?

It has been claimed that, when asked which version of GPT it is based on, the assistant answered “GPT-3”. Jetbrains reassured people that they will use whichever model is best suited to helping their customers, that (at the time of writing this post) the assistant is powered by GPT-4, and that the “GPT-3” answer is a known bug in GPT-4.

When I asked it the same question more recently, I got the below response:

|

|

I think another reason for the confusion is that it can get expensive, quickly, when using GPT-4. Thus, when we see the fairly low price point for the AI assistant (roughly £9 on a monthly recurring subscription) it is natural to wonder what we’re paying for.

I did reach out to Jetbrains on it and got a great response:

|

|

This makes total sense: in-editor, the assistant needs to give advice and code ahead quickly, whereas when chatting to the assistant you are aware that it might take a few seconds. Kudos to Ivan at Jetbrains for sending me the above.

Comparisons

I decided to do some simple comparisons as well. The prompt is simple enough:

Can you provide me with regex to detect italic strings in markdown?

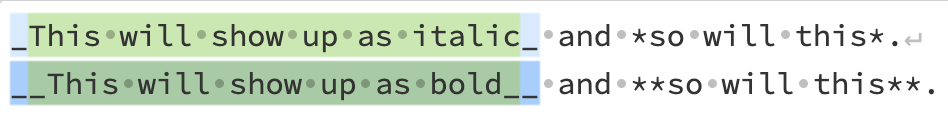

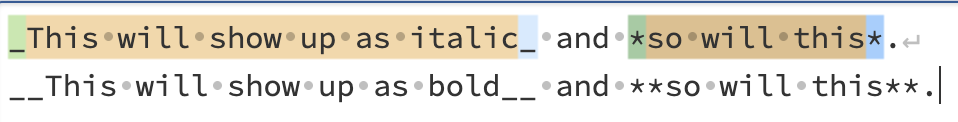

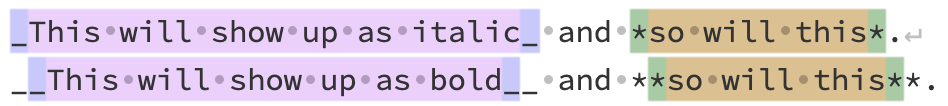

To explain, in markdown we indicate italic text using either single asterisks or underscores as follows:

|

|

Seems simple enough but there is some further complexity for the parser when we take into account that bold text is denoted by double asterisks or underscores:

|

|

Let’s see what various models return for us:

GPT-3.5

Model returns the below along with some basic explanations of the regex:

|

|

It should be noted that it also suggests further refining the request as this might not cover all our scenarios.

This is quite rudimentary and clearly leaves out asterisk matching. Here’s what we get when we try to match it against our simple markdown example on regex101:

As predicted, it ignores the asterisks but also matches the double underscored bold text. Not ideal.

GPT-4

Model returns the below:

|

|

This time it gives a very in-depth explanation of what each group matches and explains how some look-aheads and look-behinds are necessary to avoid matching text that is intended for bold as well.

Looking at the matches, we see that it’s matched correctly and ignores the text intended to be bold.

Jetbrains AI

Model returns the below:

|

|

No explanation is given, though it notes that it may not cover all edge cases. It’s the lack of an explanation that I found interesting. This could be a sign that the prompt is designed so that the assistant trusts you know how to read regex and doesn’t need to hold your hand in that regard. More on this later.

Let’s look at the matches:

Okay, so we have a different result from the others altogether. This time it seems to have attempted to take doubled asterisks/underscores into account, but without the look-ahead and look-behind it doesn’t quite work out that way.
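To make the difference concrete, here is a minimal C# sketch contrasting a naive pattern with one that uses look-aheads and look-behinds. Both patterns are illustrative assumptions, not the regexes any of the three models returned, and `MarkdownItalics`, `NaivePattern`, `StrictPattern` and `Find` are hypothetical names. Like the models’ answers, the stricter pattern does not cover every edge case (identifiers such as `snake_case_name` would still trip it up).

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.RegularExpressions;

public static class MarkdownItalics
{
    // Naive: anything between two delimiter characters.
    // This also fires inside **bold** and __bold__ text.
    public const string NaivePattern = @"[*_](.+?)[*_]";

    // Stricter (an assumption, for illustration only):
    //   (?<![*_])    the opening delimiter is not preceded by another delimiter
    //   ([*_])       the opening delimiter itself, captured as group 1
    //   (?![*_\s])   ...and is not followed by a delimiter or whitespace
    //   (.+?)        the italic text, captured as group 2
    //   (?<![*_\s])  the text does not end in a delimiter or whitespace
    //   \1(?![*_])   the same delimiter closes it, and is not doubled
    public const string StrictPattern =
        @"(?<![*_])([*_])(?![*_\s])(.+?)(?<![*_\s])\1(?![*_])";

    // Returns the requested capture group of every match, in order.
    public static List<string> Find(string markdown, string pattern, int group)
    {
        return Regex.Matches(markdown, pattern)
            .Cast<Match>()
            .Select(m => m.Groups[group].Value)
            .ToList();
    }
}
```

Calling `MarkdownItalics.Find("Plain *one* and _two_, but **three** and __four__.", MarkdownItalics.StrictPattern, 2)` returns only `one` and `two`, whereas the naive pattern (group 1) also picks up fragments of the bold text.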

Now let’s dig into some cool things it can do.

Auto-completion

Jetbrains’ AI assistant also uses ghost text to give coding suggestions, similar to Copilot.

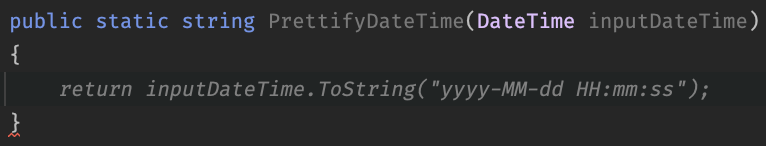

As an example, I typed in public static string PrettifyDateTime(string inputDateTime) and it suggests the below:

I mean, that’s just formatting a date and time, fair enough. What I liked was the speed with which it suggested this: I had barely finished typing the opening curly brace and the suggestion was there. Simply hit tab to complete it.

We can also prompt the assistant with “Implement given function: PrettifyDateTime” and it will give us a bigger explanation of its implementation… but also change its implementation!

AI Assistant: You can implement the PrettifyDateTime method by converting the input DateTime to a string which represents the date and time information in a more human friendly manner. Here it is in C#:

|

|

This function will return a string representing the date and time in long date and short time pattern. It is just a suggestion, you can modify it according to your requirements. Also, please note that I’ve used the “f” format string to format the date. This string represents a combination of the long date (“D”) and short time (“t”) patterns, separated by a space. You can use any other standard or custom format strings that suit your needs. Refer to the DateTime.ToString Method documentation for more information.
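For anyone who hasn’t met the “f” format string mentioned in that response, here is a minimal sketch of what such a method might look like. It is not the assistant’s actual output; the optional `culture` parameter, the `DateFormatting` class name and the fall-back behaviour for unparseable input are all assumptions made for illustration.

```csharp
using System;
using System.Globalization;

public static class DateFormatting
{
    // Hypothetical sketch: parse the input, then format it with the
    // standard "f" specifier (long date + space + short time).
    public static string PrettifyDateTime(string inputDateTime, CultureInfo culture = null)
    {
        // Assumption: default to the invariant culture so output is predictable.
        culture ??= CultureInfo.InvariantCulture;

        if (!DateTime.TryParse(inputDateTime, culture, DateTimeStyles.None, out var parsed))
        {
            // Assumption: hand back the raw input rather than throwing.
            return inputDateTime;
        }

        return parsed.ToString("f", culture);
    }
}
```

The exact text produced depends on the culture passed in, which is worth bearing in mind if the result ends up in front of users in different locales.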

There’s actually a simple explanation for this and it might give further indication to what the underlying model is.

When we’re looking for auto-completion of code, we need something that can make quick suggestions. GPT-4, as an example, won’t be fast enough to suggest something before the user has already started entering code, which interrupts the user’s flow. Imagine if your Intellisense auto-complete took a few seconds before it gave you suggestions. I shudder at the thought.

Further prompting

What if, using the above context, we prompted it a bit further? I tried it out in an attempt to get a function that would return something along the lines of “6 days ago”, similar to a function I had recently written for my blog.

I asked, “Can you make it return shorthand like ‘x days ago’?” and it returned the following bit of code:

|

|

Which is pretty good! Outside of the fact that any future date would return “0 years ago”, it’s done quite well.

Commit Summarisation

I’m more comfortable using version control in a terminal but this feature could well swing me the other way - after all, the less reason to leave the safe space that is your IDE, the better, right?

In my blog project I had a boolean property called IsPage in the metadata for each blog post. The idea being that with that flag at true, the code would treat it as a page on its own instead of a blog post. I removed this for now and I also added a ShowContents boolean that, when enabled, will display a dynamically created table of contents based on the headers of a page. Normally my commit message would be fairly short, something like “removed IsPage and added ShowContents”. In part because I’m lazy, but also for reasons that I’m too lazy to get into.

Now, I get this nifty button:

I hit that button, which tells the assistant to generate a commit message for me and this is what it comes up with:

|

|

Impressively, it took all of 2 seconds. This was only for minor changes in a single file.

I edited several more and hit it again, for anyone curious out there:

|

|

In fairness, it is highlighting the major changes that I made; the only one it missed is a CSS change in a cshtml file.

Context Actions

Below is my original implementation of the date time format “x days ago”. Let’s see what the AI can do for us in this particular case.

|

|

First we select the code, right click, and we’re presented with this context menu:

Code Explanation

Selecting “Explain Code” drops us into the assistant’s window and asks it to explain the code we have selected, and it’s pretty spot on in its response:

|

|

It then goes on to explain every case in the switch statement before ending with this little nugget:

|

|

Find Problems

When asked to find problems, it actually finds quite a few:

There are few potential problems with the provided GetFriendlyDateTime method:

- The method uses DateTime.Now to calculate the difference. This will use the local time of the server. If your application is global, this could lead to incorrect results due to timezone differences. It is recommended to use DateTime.UtcNow instead.

|

|

- The way months and weeks are calculated may not be very accurate. Days in a month vary from 28 to 31 days, computation using 30 every time may not provide accurate results. Weeks are considered as lasting exactly 7 days, which might not be the case due to Daylight Saving Time transitions. If it is critical for your application to have these values precise, consider using more refined calculation or different method.

- The _ in the switch expression is treated as a catch-all clause. As per the existing logic, it would be executed every time dateDifference.Days is more than or equal to 365. So “More than a year ago” could be also 2 years ago, 5 years ago etc.

- The method does not handle negative values. There may be occasions when the DateTime provided may be in the future compared to the current DateTime, resulting in a negative TimeSpan. This may cause unexpected results. To address the above issues, you could add an initial check to ensure dateTime is not in the future, and refine weeks and months calculations:

|

|

Outside of drawing attention to how lazy I can be, this is actually incredibly helpful. What I find especially interesting is the way it’s picked up on the differences in the numbers. I’ve had notoriously interesting prompts with GPT and other models where they outright struggled with numbers, at times even convincing themselves that 2+2=5.
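The suggestions above can be sketched roughly as follows. This is a hand-written illustration, not the assistant’s output and not the original blog code: the `FriendlyDates` class, the `utcNow` parameter (passed in so the method is easy to test) and the exact wording of the returned strings are assumptions. It needs C# 9 or later for the relational patterns in the switch expression.

```csharp
using System;

public static class FriendlyDates
{
    // Hypothetical sketch of an "x days ago" formatter that works in UTC,
    // guards against future dates, and reports the actual number of years.
    public static string GetFriendlyDateTime(DateTime dateTimeUtc, DateTime utcNow)
    {
        TimeSpan dateDifference = utcNow - dateTimeUtc;

        // Initial check: a future date would otherwise yield a negative TimeSpan.
        if (dateDifference < TimeSpan.Zero)
        {
            return "In the future";
        }

        int days = dateDifference.Days;
        return days switch
        {
            0 => "Today",
            1 => "Yesterday",
            < 7 => $"{days} days ago",
            < 30 => Plural(days / 7, "week"),
            // Still an approximation: months are treated as 30 days.
            < 365 => Plural(days / 30, "month"),
            // The catch-all now reports how many years, not just "more than a year".
            _ => Plural(days / 365, "year"),
        };
    }

    private static string Plural(int count, string unit) =>
        count == 1 ? $"1 {unit} ago" : $"{count} {unit}s ago";
}
```

A caller would pass `DateTime.UtcNow` as the second argument, for example `FriendlyDates.GetFriendlyDateTime(publishedUtc, DateTime.UtcNow)`, where `publishedUtc` stands in for whatever UTC timestamp is being displayed.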

Refactoring

I rewrite my own code, a lot. I have asked ChatGPT in the past to rewrite methods for me, purely as a thought exercise as it often lacks the context to really improve on things.

After implementing the fixes suggested above, I asked it to refactor the code. It suggested extracting methods for each case statement, something that makes sense from a readability standpoint but I’m not overly fond of it. I find there’s a balance between readability and complexity when it comes to something so simple.

Something I do love is this button you get at the top of the refactored suggestion

which does exactly what it sounds like, it opens a diff and allows you to apply the code. With larger chunks of code you can easily cherry pick if you don’t want all the changes.

Write Documentation

Pretty self-explanatory. Really useful for writing XML documentation that can be picked up by automation to auto-generate help files for your code or to simply fill in some information for Intellisense (code completion) to pass along.

Here’s what it generated, without any hassle:

|

|
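For readers who haven’t seen C# XML documentation comments before, here is a hand-written sketch of their general shape. It is not what the assistant generated, and `DateHelpers.DaysSince` is a hypothetical helper invented purely for illustration.

```csharp
using System;

public static class DateHelpers
{
    /// <summary>
    /// Counts the whole days that have elapsed between two points in time.
    /// </summary>
    /// <param name="dateTimeUtc">The earlier moment, expressed in UTC.</param>
    /// <param name="utcNow">The moment to measure against, expressed in UTC.</param>
    /// <returns>
    /// The number of whole days elapsed, or 0 when
    /// <paramref name="dateTimeUtc"/> lies in the future.
    /// </returns>
    public static int DaysSince(DateTime dateTimeUtc, DateTime utcNow)
    {
        TimeSpan difference = utcNow - dateTimeUtc;
        return difference < TimeSpan.Zero ? 0 : difference.Days;
    }
}
```

The `<summary>`, `<param>` and `<returns>` tags are what the IDE surfaces in code completion tooltips and what documentation generators pick up.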

In-line Assistance

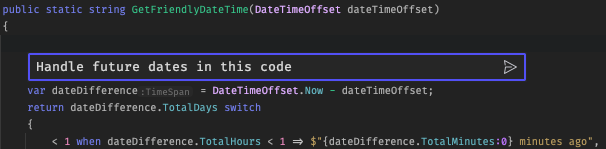

All of the above is available by simply hitting cmd-\ to get an inline prompt:

Now you can ask it to generate or explain code, you can even just use it as a rubber ducky to throw questions at.

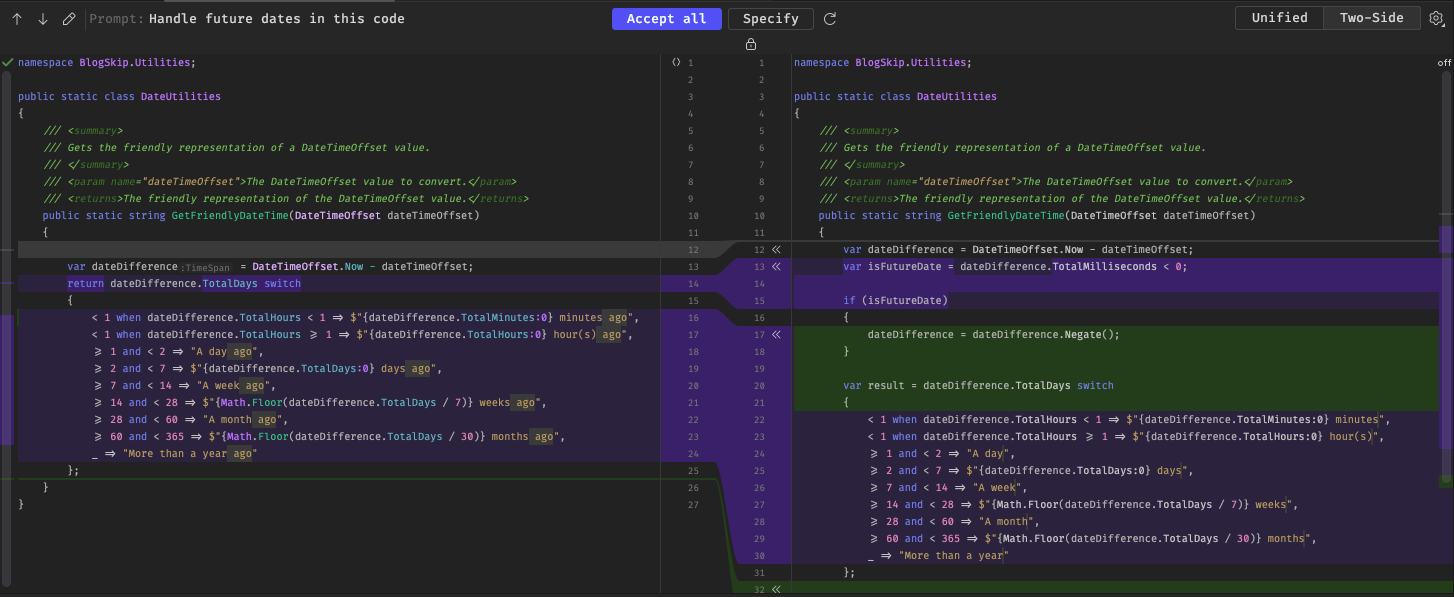

In this instance, I asked it to handle future dates in this code. The IDE opened a side-by-side comparison window where I could see the AI iterate over my code for a bit before ultimately suggesting some refactored code:

This was really cool to see in action and showcases how useful it can be to have the assistant be a part of the IDE.

Prompt details

One feature I appreciate is that you can dump your prompts. This means we can inspect just how these prompts are formed to begin with. I dumped the prompt from the prettify request earlier and found the following on the first few lines:

|

|

Looks like some simple metadata identifying the chat.

|

|

Now we’re getting into some interesting bits.

|

|

This is the context that gets passed to the assistant so it better understands your environment.

|

|

One of my favourite bits. Obviously this immediately smells like they’re just protecting their product, which they’re more than allowed to do, but if you think about it, it’s also to make your life easier. You don’t need the assistant telling you “Open this file in WebStorm and edit it there instead.”

|

|

This is the initial prompt from the user, but notice that it has also attached the file you’re working on. This expands the context quite a bit. It’s not project-wide context, but given the cost of passing that much data, I don’t blame it. Besides, if we’re following SOLID principles this should be fine, right? Right?

Other Jetbrains products

I tried the assistant in WebStorm and PyCharm and it’s similarly implemented in both of those and functions in much the same way. I did have a couple of crashes in WebStorm but that could well be the assistant keeling over upon inspecting my front-end code.

The last Jetbrains product I use is DataGrip. It’s also the only product I tested that doesn’t include the AI Assistant plugin automatically with the latest version. I suspect this is simply due to the fact that there’s a strong chance of someone working with sensitive data which should not be shared outside of the organisation. Or, perhaps it’s simply still up-and-coming (it’s included in DataLore after all).

Data Protection Concerns

Whenever a new data-processor is introduced it immediately flags concerns with data protection. For now, this can only be mitigated by turning off the AI assistant, as there is no control over what happens to your data once it’s sent up.

Jetbrains does keep data sending, saving, and sharing as transparent as possible, which is great, and in 2024 they’re releasing an Enterprise license which includes an on-premise installation (along with a bunch of further features). More at https://www.jetbrains.com/ai/?plan=individuals#plans-and-pricing

Conclusion

ChatGPT took ~2 months to reach 100 million users, smashing previous records and clearly showing us that AI is here to make an impact. The sooner we learn to utilise it to our advantage, the better. Tools like Jetbrains’ AI assistant add massive productivity gains to my own workflow. Imagine if it were employed across an organisation. As the technology evolves and gets even better, I’d like my workflows to evolve with it. As such, I recommend at least trying it for yourself.

As we move into the future, we can hopefully stop seeing these models as something that will “steal our jobs” but rather as something that could augment them.

If you’re interested in trying it out for yourself, head over to https://www.jetbrains.com/ai/ where you can sign up for a free 7-day trial.